Cover of the 1972 Edition of Perceptrons

Image processed cover of the 1972 Edition of Perceptrons.

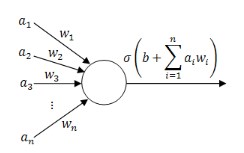

exceeds a threshold or not. Here a1 .. an are the n inputs to the perceptron, and w1 .. wn are the corresponding n weights. Rosenblatt investigated schemes whereby the magnitudes of the weights would be altered under (supervised) training. Rosenblatt did not develop a formula for describing the training of anything other than single-layer neural networks (in modern terminology). The famous backpropagation formula had yet to be developed. Multiple-input, single-output perceptrons are commonly used as processing elements (PE) in artificial neural networks.
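The thresholding rule and the idea of altering weights under supervised training can be sketched in a few lines of Python. This is a minimal illustration rather than Rosenblatt's own formulation: the names `perceptron_output` and `train_perceptron`, the learning rate, the number of epochs, and the classic error-correction update are all assumptions introduced here.

```python
from typing import List, Sequence, Tuple

Sample = Tuple[Sequence[int], int]  # (inputs a1..an, desired output)


def perceptron_output(a: Sequence[float], w: Sequence[float], threshold: float) -> int:
    """Return 1 if the weighted sum a1*w1 + ... + an*wn exceeds the threshold, else 0."""
    weighted_sum = sum(ai * wi for ai, wi in zip(a, w))
    return 1 if weighted_sum > threshold else 0


def train_perceptron(samples: List[Sample], n_inputs: int,
                     epochs: int = 20, rate: float = 1.0) -> Tuple[List[float], float]:
    """Error-correction training (an assumed, textbook-style update rule)."""
    w = [0.0] * n_inputs
    threshold = 0.0
    for _ in range(epochs):
        for a, target in samples:
            error = target - perceptron_output(a, w, threshold)
            # Nudge each weight toward the desired output; move the
            # threshold in the opposite direction.
            w = [wi + rate * error * ai for wi, ai in zip(w, a)]
            threshold -= rate * error
    return w, threshold


AND_SAMPLES: List[Sample] = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]
XOR_SAMPLES: List[Sample] = [((0, 0), 0), ((0, 1), 1), ((1, 0), 1), ((1, 1), 0)]

w, t = train_perceptron(AND_SAMPLES, n_inputs=2)
and_learned = all(perceptron_output(a, w, t) == y for a, y in AND_SAMPLES)
```

With these settings the unit learns AND. Training the same unit on `XOR_SAMPLES` always leaves at least one input pattern misclassified, because no single weighted sum and threshold separates the XOR cases.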

- Learn to simulate the XOR Gate

- Distinguish on the basis of (digital) connectivity such figures as [figure not reproduced] and [figure not reproduced]

What IS controversial is whether Minsky and Papert shared and/or promoted this belief.

Following the rebirth of interest in artificial neural networks, Minsky and Papert claimed, notably in the later "Expanded" edition of Perceptrons, that they had not intended such a broad interpretation of the conclusions they reached regarding perceptron networks.

However, the writer was actually present at MIT in 1974, and can reliably report on the chatter then circulating at the MIT AI Lab.

But what were Minsky and Papert actually saying to their colleagues in the period immediately after the publication of Perceptrons? There IS a written record from this period: Artificial Intelligence Memo 252, January 1, report

Marvin Minsky and Seymour Papert, "Artificial Intelligence Progress Report: Research at the Laboratory in Vision, Language, and other problems of Intelligence"

A recent check found this report online at http://bitsavers.trailing-edge.com/pdf/mit/ai/aim/AIM-252.pdf

Starting at page 31 of the "Progress Report" there is a very brief overview of the book Perceptrons. Minsky and Papert define the PERCEPTRON ALGORITHM as follows:

- PERCEPTRON ALGORITHM: First some computationally very simple functions of the inputs are computed, then one applies a linear threshold algorithm to the values of these functions.

- GAMBA PERCEPTRON: A number of linear threshold systems have their outputs connected to the inputs of a linear threshold system. Thus we have a linear threshold function of many linear threshold functions.

- Virtually nothing is known about the computational capabilities of this latter kind of machine. We believe that it can do little more than can a low order perceptron. (This, in turn, would mean, roughly, that although they could recognize (sp) some relations between the points of a picture, they could not handle relations between such relations to any significant extent.) That we cannot understand mathematically the Gamba perceptron very well is, we feel, symptomatic of the early state of development of elementary computational theories.

As a technical side point, note that Minsky and Papert restrict their discussion to the use of a "linear threshold" rather than the sigmoid threshold functions prevalent in contemporary neural networks.
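To make the two notions concrete, the sketch below contrasts a hard linear threshold with a sigmoid, and wires three threshold units together in the Gamba arrangement quoted above (a linear threshold function of linear threshold functions). It is only an illustration: the function names, the `gain` parameter, and the hand-picked weights and thresholds are assumptions made here and do not come from the memo or the book. The weights are set by hand, not learned.

```python
import math


def linear_threshold(x: float, threshold: float) -> int:
    """Hard threshold: output jumps from 0 to 1 as x crosses the threshold."""
    return 1 if x > threshold else 0


def sigmoid_threshold(x: float, threshold: float, gain: float = 1.0) -> float:
    """Smooth alternative: output rises gradually from 0 to 1 around the threshold."""
    return 1.0 / (1.0 + math.exp(-gain * (x - threshold)))


def gamba_xor(a1: int, a2: int) -> int:
    """Two first-layer threshold units feeding one output threshold unit."""
    h_or = linear_threshold(a1 + a2, 0.5)    # fires if at least one input is on
    h_and = linear_threshold(a1 + a2, 1.5)   # fires only if both inputs are on
    # Output unit: weight +1 on h_or, weight -1 on h_and, threshold 0.5.
    return linear_threshold(h_or - h_and, 0.5)


xor_table = {(a1, a2): gamba_xor(a1, a2) for a1 in (0, 1) for a2 in (0, 1)}
```

With these hand-chosen values the network's output is 1 exactly when one input, but not both, is on. A sigmoid with a large `gain` approaches the hard threshold, while staying differentiable.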

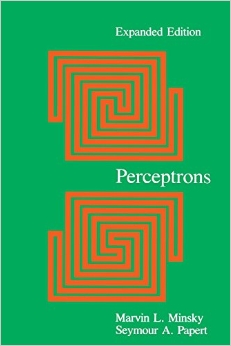

Cover of the Expanded Edition of Perceptrons, published in 1987. The cover differs topologically from that in earlier editions, as the lower figure details a simply connected region.

Cover of the Expanded Edition of Perceptrons after segmentation, showing that in the lower figure the curves outline a single connected region while in the upper figure there are two unconnected regions.
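The kind of test that segmentation performs on the cover figures, namely counting how many connected regions a binary image contains, can be sketched as follows. This is a plain breadth-first flood fill with 4-connectivity, chosen here for brevity. It is not the algorithm used to process the cover, and the function name `count_regions` and the two toy images are hypothetical stand-ins for the actual artwork.

```python
from collections import deque
from typing import List


def count_regions(image: List[List[int]]) -> int:
    """Count 4-connected regions of 1-pixels in a binary image."""
    rows = len(image)
    cols = len(image[0]) if rows else 0
    seen = [[False] * cols for _ in range(rows)]
    regions = 0
    for r in range(rows):
        for c in range(cols):
            if image[r][c] != 1 or seen[r][c]:
                continue
            # Found an unvisited foreground pixel: flood-fill its region.
            regions += 1
            seen[r][c] = True
            queue = deque([(r, c)])
            while queue:
                y, x = queue.popleft()
                for dy, dx in ((1, 0), (-1, 0), (0, 1), (0, -1)):
                    ny, nx = y + dy, x + dx
                    if (0 <= ny < rows and 0 <= nx < cols
                            and image[ny][nx] == 1 and not seen[ny][nx]):
                        seen[ny][nx] = True
                        queue.append((ny, nx))
    return regions


# Toy images (hypothetical): two separate blobs versus one connected arch.
TWO_BLOBS = [[1, 1, 0, 1, 1],
             [1, 1, 0, 1, 1]]
ONE_ARCH = [[1, 1, 1, 1, 1],
            [1, 0, 0, 0, 1]]

two_count = count_regions(TWO_BLOBS)
one_count = count_regions(ONE_ARCH)
```

Here `two_count` is 2 and `one_count` is 1, mirroring the distinction between the upper and lower cover figures.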


About the author: Dr Harvey A Cohen

- Gained a PhD in Theoretical Physics from the Australian National University (ANU) 1965

- Postdoctoral at Mathematical Physics, University of Adelaide 1966-69.

- From 1970 onwards at newly founded La Trobe University, Melbourne.

- His PhD and early theoretical research papers were in QED calculation machinery ("bypassing renormalisation"), higher spin particles, and general relativity.

- Teaching mechanics to applied mathematics students at La Trobe from 1970 onwards led to the recognition that undergraduate students could often parrot "solutions" yet seriously lack physics understanding. Found the successful application of key heuristics to be essential to physics maturity.

- Developed a set of qualitative physics puzzles called dragons that distinguish the tyro from the physics expert. Dragons were amazingly similar in scope to Piaget's Conservation puzzles that distinguish four-year-old "non-conservers" from six-year-old "conservers". Cohen collected protocols of his subjects "loud thinking" their attempts, and analysed the approach taken in terms of heuristics.

- Invited to join Papert's LOGO Group during 1974, during which time he wrote MIT AI Lab Memo 338.

- Was invited (with travel costs paid) to attend Loud Thinking Conference at MIT Dec 1975 - Jan 76 with leading AI researchers.

- En route to MIT visited the (second) computer store in El Camino Real, Silicon Valley, to see the very first kit microcomputers, Altair and Poly-88 (pre-release). Actually carried copies of Byte to MIT for the Loud Think In, giving one to Marvin Minsky.

- Back at La Trobe University in 1976, Cohen started a microcomputer-based educational Robotics Project called OZNAKI (Polish for LOGO). This involved developing a comprehensive microprocessor software development system, a macro-based language for developing portable code, and of course a turtle robot. By June 1977 he had implemented, with research student Rhys Francis, the first microcomputer "tiny" LOGOs, which were described in IEEE Computer. Notably, the Cohen/Francis paper on OZNAKI was then reprinted in a special omnibus compilation of papers that included articles by the architects of the first 16-bit microprocessors (the Intel 8086, Motorola 68000) and by Alan Kay (Dynabook concept) and Adele Goldberg (SMALLTALK).

- In 1978-9 was visiting professor in the Division of Research and Education at MIT, an MIT area that was transformed into the MIT Media Lab.

- In 1984 conceived and implemented a notable early communicator for the severely disabled non-vocal and oversaw the construction of a battery of ten such Communicators for use in Special Education in Melbourne.

- Was developer of software for the Australian microcomputer the MicroBee (released 1983), notably a "tiny" LOGO called OZLOGO.

- The pioneering paper(s) on digital image segmentation were written by Azriel Rosenfeld and J. Pfaltz in 1966 (and 1968), yet were not cited in Perceptrons (first edition 1968). It is therefore worth noting that Cohen extended the (two-pass) Rosenfeld-Pfaltz algorithm by producing a single-pass segmentation algorithm for binary images, and subsequently a single-pass segmentation algorithm for gray-scale images. {See Papers [90.10] and [93.4] here}.

- Cohen is still active, acting as Technical Chair of an IEEE Workshop on Sensors and Energy Harvesting, held in Melbourne, December 2017.

Married to Dr Elizabeth Essex-Cohen, pioneering space physicist. In 1974 Harvey was in the MIT AI Lab while Elizabeth was working on the future GPS in the US Air Force Geophysics Lab outside Boston.
